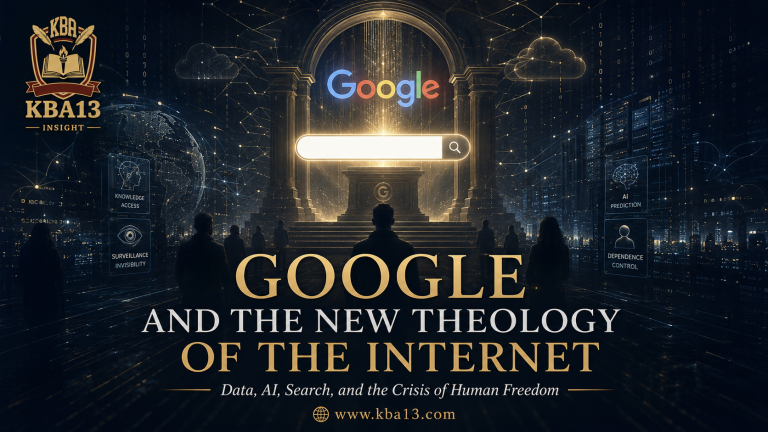

Cloud infrastructure and data centers symbolizing the hidden material power behind Google and the internet.

Introduction: When Human Beings Began to Pray Through a Search Bar

Google is no longer merely a search engine. In the digital age, Google has become one of the most powerful systems shaping how human beings search, remember, think, move, consume, publish, and become visible. Through data, artificial intelligence, cloud infrastructure, advertising, search ranking, and digital memory, Google has become a quiet architecture of modern life. This essay examines Google as a symbol of digital power and asks one central question: what happens to human freedom when knowledge itself passes through a platform?

There was a time when human beings looked upward in times of uncertainty. They asked the sky, the scriptures, the elders, the philosophers, the shamans, the scholars, or the silence within the heart. Modern human beings now lower their heads toward a screen. The gesture has changed, but the spiritual structure remains strangely familiar. A question appears. A search bar receives it. A list of answers descends. The old world waited for revelation. The new world waits for the loading speed.

Google did not arrive as a prophet. Google arrived as a tool. That is why modern society failed to notice the scale of its transformation. Tools are welcomed because they appear harmless. A hammer does not ask for devotion. A map does not ask to be loved. A dictionary does not record the anxiety behind the word being searched. Google began with a promise of simplicity: type anything, find something. From that promise emerged a new civilization of dependency. Human beings no longer search only on Google. They increasingly think through Google, move through Google, remember through Google, advertise through Google, publish through Google, and disappear when Google refuses to see them.

Google is “godlike” because of data, reach, cloud infrastructure, artificial intelligence, monopoly power, content gatekeeping, and its ability to track and influence human behavior. The language is deliberately excessive, but the excess hides a serious question. What happens when a private company becomes the most common entrance to knowledge? What happens when visibility, memory, movement, desire, commerce, and attention pass through a corporate system that most users barely understand? What happens when the modern soul, proud of its freedom, voluntarily places its questions inside a machine that remembers almost everything?

To say that Google is a god is, of course, not theology. It is a diagnosis. Google does not create the universe, but Google increasingly arranges the universe that appears before us. Google does not judge the soul, but Google can judge relevance. Google does not forgive sin, but Google can bury a page, suspend an account, demonetize a channel, redirect traffic, or make an institution invisible to the public. Google does not demand worship, but Google receives daily rituals from billions of people. Every query is a small act of dependence. Every click teaches the system. Every search becomes a confession without the language of confession.

The modern world did not lose religion as completely as it thinks. It simply changed the architecture of belief. Instead of altars, it built interfaces. Instead of priests, it trusted engineers. Instead of sacred texts, it accepted terms of service. Instead of miracles, it celebrated instant results. Instead of divine omniscience, it accepted data extraction. Instead of prayer, it typed keywords.

The question, therefore, is not whether Google is literally divine. The question is why modern life has created institutions that increasingly resemble secular gods: invisible, everywhere, intimate, predictive, feared, useful, and almost impossible to escape.

The First Commandment of the Digital Age: Be Searchable

In the old world, existence did not require indexing. A person could live, speak, write, teach, love, fail, and die without being ranked. A village could exist without appearing on a map application. A scholar could be respected without mastering search visibility. A business could survive through trust, reputation, and human memory. That world has not fully disappeared, but its authority has weakened. Today, to exist socially, commercially, and intellectually, one must be searchable.

This is the first hidden commandment of the digital age: be searchable or be forgotten.

Google did not merely help people find information. Google changed the meaning of being found. A website that does not appear on Google is not simply hard to access. It is almost non-existent in the practical imagination of the public. A writer whose name is not indexed loses part of their intellectual life. A university program without search visibility becomes provincial even if its scholarship is strong. A think tank without digital discoverability becomes a voice speaking into a sealed room. In this world, invisibility is not caused only by censorship. Invisibility can be produced by a poor ranking.

This is one of Google’s most profound forms of power. It does not need to say, “This exists” or “This does not exist.” It simply arranges what appears first, what appears later, and what never reaches the eyes of the ordinary reader. The difference between the first result and the fiftieth result is not merely technical. It is civilizational. Human beings rarely travel far into the wilderness of search results. They trust the first page as if relevance were a moral category.

Here lies the quiet danger. Google’s ranking system is not merely a convenience. It becomes an epistemological machine. It shapes what appears credible before the reader has begun to think. It establishes a hierarchy of attention. It rewards some forms of writing, structure, authority, speed, and optimization while punishing others. A civilization that once fought over truth now fights over visibility. The winner is not always the wisest. The winner is often the most legible to the machine.

Yet the irony is sharper than a simple accusation. Google became powerful because the internet was chaotic. Without search engines, the web would be an endless desert of disconnected fragments. Google gave order to the chaos. It rescued users from informational darkness. It made the web navigable. It democratized access in ways older institutions never did. The problem is not that Google brought order. The problem is that one order became too dominant, too intimate, and too deeply embedded in the daily act of knowing.

A library classifies books. Google classifies reality as encountered by billions of people. That difference changes everything.

Data as the New Form of Omniscience

Every civilization has imagined a power that knows what human beings cannot hide. In religious imagination, this knowledge belonged to God. In modern bureaucratic states, it belonged to archives, police files, census records, intelligence reports, and administrative documents. In the age of Google, knowledge is no longer collected only through official institutions. It is harvested through ordinary life.

Search is not a neutral act. A person searching for symptoms, debt relief, divorce law, political conflict, religious doubt, migration routes, war news, or psychological pain is not merely seeking information. That person is revealing a condition of life. A search query may contain fear before fear becomes speech. A YouTube history may reveal loneliness before the person admits it. A location pattern may reveal habits more honestly than autobiography. Digital behavior often tells the truth before language becomes brave enough to confess it.

This is why data matters. Data is not just information. Data is human life translated into patterns. When collected at scale, those patterns become predictive power. The system does not need to know the full human being. It only needs enough signals to anticipate behavior. The machine does not need wisdom. It needs probability. It does not need to understand the soul. It needs to know which result, advertisement, video, route, or suggestion is most likely to prompt the next action.

This is the difference between human knowledge and platform knowledge. Human knowledge is often slow, interpretive, incomplete, and moral. Platform knowledge is fast, statistical, cumulative, and operational. It does not ask, “What is the meaning of this life?” It asks, “What is this user likely to do next?” That question appears modest, but it is the foundation of enormous power.

Google’s strength lies not only in collecting data, but in making data useful. Data without interpretation is storage. Data with machine learning becomes prediction. Data with advertising becomes money. Data with search becomes authority. Data with maps becomes movement. Data with video becomes attention. Data with cloud infrastructure becomes dependency. Data with artificial intelligence becomes an architecture of anticipation.

Human beings often imagine surveillance as a camera pointed at them. That image is too crude. The deeper form of surveillance is not always watching. It is learning. It learns the rhythm of the user. It learns language preferences, ideological curiosity, emotional cycles, shopping interests, professional needs, aesthetic tastes, and political vulnerability. The system may not know love, but it can infer loneliness. It may not know faith, but it can detect religious searching. It may not know despair, but it can recognize patterns of crisis.

The old gods saw everything because they were divine. The new platforms see so much because people feed them willingly.

The Search Engine as Confessional Booth

Modern people often believe they are more private than earlier generations because they no longer confess before religious authorities. Yet they confess constantly to machines. They confess through search queries, drafts, deleted phrases, watched videos, map routes, saved passwords, calendar entries, photo backups, and voice commands. The digital confession does not require shame. It requires convenience.

The search bar has become the most honest room in modern life. People may lie to family, colleagues, students, spouses, governments, and themselves. But people rarely lie to a search engine. They ask what they genuinely want to know. They reveal what they fear. They search for what they cannot say aloud. They type the question before they have formed the courage to speak it.

This creates a strange intimacy. Google becomes close not because users love it, but because users need it. Need is stronger than affection. Nobody has to emotionally admire a platform to depend on it. A person may criticize Google in public and use it five minutes later to verify a fact, find an address, translate a phrase, locate a book, understand a disease, or search for a forgotten name. This is how modern power works. It survives critique because it is functional.

The confessional metaphor becomes uncomfortable because confession traditionally involved moral accountability. A person confessed in order to be transformed. Digital confession produces a different result. The system receives the confession, stores its signals, improves predictions, and may return an advertisement, a recommendation, a ranked answer, or another pathway deeper into the ecosystem. Confession no longer ends in absolution. It ends in personalization.

This does not mean every act of data processing is sinister. Many users genuinely benefit from personalized services. Better maps save time. Search history improves relevance. YouTube recommendations can educate. Spam filters protect. Translation helps cross languages. Accessibility tools open doors. The modern digital system is powerful precisely because it is useful. If Google only harmed users, users would flee. The more difficult problem is that Google helps users while also making them more dependent.

Dependency rarely announces itself. It grows through daily usefulness. At first, the user says, “This helps me.” Later, the user says, “I cannot function without this.” Between those two sentences lies the history of modern digital surrender.

Google Does Not Control the World; It Organizes the Doorway

Crude criticism says Google controls the world. That is too simplistic. Google does not control everything. States still wage wars. Markets still collapse. Religions still mobilize communities. Universities still produce knowledge. Families still shape moral worlds. Human beings still resist, misunderstand, improvise, and disobey. Google is not an absolute ruler.

But Google organizes one of the most important doorways into the world.

That distinction matters. Power does not always mean direct command. Power often means structuring access. The person who controls the gate does not need to own the city. The person who controls the road does not need to own every destination. The person who controls the map can shape how travelers imagine the landscape. Google’s power operates in this manner. It does not own all knowledge, but it mediates access to much of it. It does not write every article, but it affects which articles are found. It does not produce every desire, but it helps route desire toward products, videos, services, and explanations.

In this sense, Google is not a king sitting on a throne. Google is closer to an atmosphere. One does not always notice an atmosphere because one breathes through it. That is precisely why atmospheric power is hard to confront. People notice oppression when it comes as violence. They rarely notice dependency when it comes as convenience.

The modern user enters the internet through pathways already arranged by invisible systems. Search results, autocomplete, snippets, maps, ads, image results, AI summaries, and video recommendations form a soft architecture around perception. The user still chooses, but choices are presented within an environment designed by others. Freedom remains, but it becomes freedom inside a curated corridor.

This is not the end of human agency. It is the reshaping of agency. A person can still think critically, compare sources, use other tools, clear data, change settings, and resist manipulation. But such resistance requires discipline. The default condition is passive trust. Most users do not audit algorithms. They accept results. They do not investigate ranking systems. They click. They do not study the political economy of search. They ask another question.

The doorway remains open. But the architecture of the doorway is not innocent.

The Empire of Defaults

One of the most underestimated forces in modern life is the default. Human beings like to imagine themselves as active decision-makers. In reality, much of life is governed by what appears first, what is already installed, what requires the least effort, what feels familiar, and what everyone else seems to use. The default is not merely a setting. It is a philosophy of power.

Google understood the empire of defaults. To become the default search engine, default map, default video platform, default browser habit, default email habit, or default analytics tool is to enter society’s unconscious layer. Once something becomes a default, it no longer needs to persuade every day. It becomes the starting point.

This is why platform power cannot be measured only by market share. It must be measured by habit share. A user may know alternatives exist, but knowledge of alternatives is not the same as migration. Switching costs are not always financial. Sometimes they are emotional, practical, social, and cognitive. People remain where their passwords are saved, where their files are stored, where their contacts are synchronized, where their videos are subscribed to, where their history is remembered, and where their devices already point.

The empire of defaults also explains why modern monopoly is difficult to fight. Old monopolies arose from scarcity. A company controlled supply, raised prices, and consumers felt trapped. Digital monopolies often appear as abundance. The service is fast, free, elegant, and useful. The trap is not visible because the door is not locked. The user is free to leave, but everything has been designed to make staying easier.

The genius of this arrangement is that domination does not look like domination. It looks like good design.

This is the new political grammar of technology. Power no longer needs to shout. It only needs to be frictionless. The smoother the experience, the fewer users ask what the experience costs. The more beautiful the interface, the less visible the extraction beneath it. The more accurate the recommendation, the less disturbing the surveillance that made accuracy possible.

Modern power does not always wear a uniform. Sometimes it wears a clean homepage.

The Cloud Is Not in the Sky

The word “cloud” is one of the greatest metaphors ever sold to the public. It suggests lightness, distance, softness, and natural beauty. A cloud floats. A cloud drifts. A cloud does not frighten. But the digital cloud is not floating in the sky. It is buried in the earth, cooled by water, powered by energy, protected by security systems, connected by cables, and owned by corporations.

This matters because whoever controls infrastructure controls possibilities. Search power is visible because users interact with it directly. Cloud power is less visible because it operates behind the screen. Yet infrastructure often matters more than interface. The public sees the website, the application, the video, the document, the email, the map. Behind all this stands a vast material system that stores, processes, authenticates, secures, and distributes digital life.

Google’s cloud infrastructure is part of a wider transformation in which private technology companies increasingly provide the backbone of public, commercial, academic, and cultural activity. This creates efficiency, but also dependency. A university may depend on cloud tools. A business may depend on analytics. A creator may depend on YouTube. A publisher may depend on search traffic. A government office may depend on digital services. A social movement may depend on discoverability.

At that point, the distinction between private service and public infrastructure becomes unstable. A platform may be legally private, commercially operated, and globally consequential at the same time. This hybrid character creates one of the hardest governance problems of the twenty-first century. How should societies regulate private systems that perform public functions? How should democratic accountability work when essential digital pathways are owned by companies rather than citizens?

The old state had ministries, courts, archives, roads, and borders. The new digital order has platforms, protocols, servers, app stores, cloud contracts, and ranking systems. Power has not disappeared. It has migrated into technical forms.

The tragedy is that public debate often arrives too late. Society celebrates innovation first, becomes dependent second, notices concentration third, and asks ethical questions fourth. By then, the system is already woven into everyday life.

Artificial Intelligence and the End of Innocent Search

Search once required a question. Artificial intelligence changes the sequence. It does not merely answer. It anticipates, summarizes, suggests, completes, predicts, and increasingly speaks in a tone of authority. The search engine was already powerful because it ranked the web. AI becomes more powerful because it can compress the web into a single answer.

This transition is enormous. A list of links still preserves some visible plurality. The user can compare sources, open different pages, examine disagreement, and sense the diversity of the web. An AI-generated answer may remove that visible struggle. It can make knowledge feel settled before the reader sees the conflict behind it. The danger is not only error. The danger is smoothness. A fluent answer can hide uncertainty more effectively than a messy search page.

Google’s long-term position in AI matters because AI rests on data, computing power, infrastructure, user behavior, and distribution. The company does not enter AI as a beginner. It brings decades of experience in organizing information, processing language, selling ads, building cloud systems, and studying user intent. This does not guarantee moral wisdom. It guarantees a strategic advantage.

The old search engine asked, “What are you looking for?” The emerging AI system asks, “Would you like me to think with you, for you, before you, and perhaps instead of you?” That question is seductive because thinking is exhausting. Modern life produces too much information, too many choices, too many documents, too many headlines, and too many anxieties. A system that summarizes the world becomes attractive because the world has become unbearable.

But when machines summarize reality, the politics of summarization become central. What is included? What is omitted? Which sources count? Which languages dominate? Which assumptions guide the answer? Which commercial interests shape the interface? Which forms of knowledge become invisible because they are not easily machine-readable?

AI does not end the problem of Google’s power. It deepens it. Search shaped access to information. AI may shape interpretation itself.

The Marketplace Inside the Mind

The modern internet is not only a library. It is a marketplace disguised as a library. Users come seeking knowledge and encounter ads, recommendations, subscriptions, sponsored content, products, services, courses, influencers, and ideological merchants. Google sits at the center of this transformation because search intent is commercially precious. A person searching is already moving toward action. That action can be educated, redirected, monetized, or captured.

This is why advertising through search became so powerful. Traditional advertising interrupts attention. Search advertising meets attention at the moment of desire. A billboard guesses. A search ad responds. A television commercial shouts at strangers. A search ad appears beside the intention. This is not merely better marketing. It is the industrialization of human intention.

The deeper issue is not that Google sells advertisements. Commerce is not evil by nature. The problem begins when the architecture of knowledge and the architecture of advertising become inseparable. When a system that organizes information also profits from directing attention, society must ask what kind of knowledge environment is being built. Can a civilization think clearly when the pathway to knowledge is also a marketplace for desire?

This does not mean every search result is corrupted or every advertisement is manipulative. The issue is more subtle. When human attention becomes the primary battlefield of profit, every institution must compete inside an economy of visibility. Writers optimize. Publishers optimize. Businesses optimize. Political actors optimize. Religious groups optimize. Universities optimize. Even truth must learn the language of discoverability.

At that point, knowledge changes character. It is no longer enough for something to be true, deep, or important. It must also be searchable, clickable, structured, tagged, ranked, and promoted. The machine does not abolish thought. It pressures thought to dress itself in machine-friendly forms.

The modern marketplace is no longer outside the mind. It increasingly appears at the exact moment the mind begins to ask.

The Crisis of Memory

Human memory is fragile, selective, emotional, and interpretive. Digital memory is different. It stores without shame. It retrieves without mercy. It connects fragments that human beings might have forgotten. Google is part of a broader digital landscape in which forgetting becomes difficult and remembering is outsourced.

This seems like progress. Why should humanity forget? Why lose documents, names, places, images, conversations, and histories? Digital memory protects civilization from decay. It helps scholars, families, institutions, courts, journalists, and ordinary users. But memory without wisdom can become a burden. Not everything preserved is understood. Not everything retrievable is meaningful. Not everything remembered should govern the future.

Google’s role in memory is not only storage. It is retrieval. What society remembers is increasingly shaped by what can be found. A forgotten scandal may return through a search. A minor error may become permanent. A shallow article may outrank a deeper work. A simplified narrative may dominate because it is easier to index. A person may become trapped by old visibility. A community may become known through the most searchable version of itself, not the truest one.

This creates a new politics of memory. To control memory is to influence identity. Nations have always known this. That is why archives, monuments, textbooks, museums, and ceremonies matter. Google did not invent the politics of memory, but it transformed its scale. The archive is no longer only in state buildings. It is distributed across servers, platforms, cached pages, images, videos, maps, comments, and search results.

The human being now lives under a strange tension. On one side, modern life demands self-expression. On the other side, digital systems preserve expression beyond its original moment. The young become searchable before they become wise. The angry become permanent before they become calm. The mistaken become indexed before they become mature. The internet remembers faster than the human soul can grow.

This is one of the cruelest features of digital civilization. It promises freedom of expression, then turns expression into evidence.

Why People Still Trust Google

The critique of Google becomes weak when it fails to explain trust. People continue using Google not because they are stupid, but because Google usually works. This must be admitted clearly. The service is fast. The results are often useful. Maps are practical. Gmail is reliable. YouTube is immense. Search remains familiar. Google’s ecosystem reduces friction in everyday life.

A serious argument must begin from this truth. Modern dependency is built on competence. Google’s power is not accidental. It is earned, engineered, protected, expanded, and normalized. People trust systems that repeatedly solve problems. Each solved problem becomes another layer of loyalty. Over time, loyalty becomes habit. Habit becomes dependence. Dependence becomes culture.

This is why moral panic alone cannot defeat platform power. Telling people that Google is dangerous while offering no better alternative is like telling people that roads are dangerous while providing no paths. Critique without replacement becomes noise. People may agree in theory and continue in practice. The platform survives because it is woven into life.

The proper response is not nostalgic rejection. Humanity cannot return to a pre-digital world, and romanticizing that world serves no serious purpose. The task is more difficult: to build digital maturity inside a world that will remain digital. That means asking not only how to escape Google, but how to reduce dependency, diversify knowledge pathways, strengthen public-interest technology, demand accountability, teach critical search habits, protect data rights, and build institutions that do not collapse when algorithms change.

The question is not whether one should use Google. The question is how not to become intellectually governed by Google.

The Moral Problem: Convenience as Surrender

Every age has a moral weakness. The moral weakness of the digital age is convenience. Modern people will defend freedom in speeches and surrender it in settings. They will condemn surveillance and accept tracking for smoother service. They will praise independent thought and click the first answer. They will fear monopoly and use the default. They will demand privacy and store their lives in systems they do not understand.

This is not hypocrisy in the simple sense. It is exhaustion. The modern person is tired. Too many passwords, too many platforms, too many decisions, too much information, too much speed. Convenience becomes survival. The platform does not need to defeat human freedom. It only needs to offer relief from complexity.

That is why the struggle is moral, not merely technical. The user must recover the discipline of friction. Not all friction is bad. Some friction protects thought. Some friction slows desire. Some friction prevents manipulation. Some friction allows human beings to ask, “Why am I being shown this? Who benefits from this? What am I giving away? What am I not seeing?”

A frictionless society is not necessarily a free society. Freedom requires interruption. It requires the ability to pause before clicking, compare before believing, refuse before accepting, and think before being guided.

Google’s deepest power lies not in forcing people to obey, but in making obedience feel unnecessary to notice. The system works. The user continues. The question disappears. That disappearance is the victory.

Conclusion: The God We Built Because Thinking Became Too Heavy

Google is not God. But Google reveals what modern humanity now seeks from its gods: speed, certainty, memory, guidance, prediction, visibility, and relief from confusion. The ancient person sought salvation. The modern user seeks relevance. The ancient believer feared divine judgment. The modern creator fears algorithmic invisibility. The old pilgrim traveled to sacred places. The modern subject opens a browser and enters the temple of indexed reality.

The tragedy is not that Google became powerful. The tragedy is that human beings built a world in which such power became necessary. The internet became too vast, so Google organized it. Information became too abundant, so Google ranked it. Desire became too profitable, so Google monetized it. Memory became too unstable, so Google stored it. Movement became too complex, so Google mapped it. Thought became too burdened, so AI began to summarize it.

Google is not an alien god imposed from outside history. Google is the child of modern civilization: born from curiosity, capitalism, engineering, impatience, ambition, laziness, brilliance, and the human hunger to know without waiting.

This is why the critique must be honest. Google has helped humanity. Google has also trained humanity to accept a dangerous bargain. The bargain says, “Give us your signals, and we will give you relevance. Give us your habits, and we will give you convenience. Give us your questions, and we will give you answers. Give us your attention, and we will give you the world.”

But the world given through a platform is never simply the world. It is the world arranged, ranked, filtered, optimized, and returned through an architecture of power.

The future of human freedom will not be decided only by whether Google becomes more regulated, more ethical, or more transparent. It will also be decided by whether human beings recover the courage to think beyond the first result. A free mind must know how to search but also how to doubt the search. A mature civilization must use tools, but also examine the tools that shape its imagination.

The search bar will remain. The question is whether the human being before it will remain sovereign.