Technology, Religion, and the Crisis of Human Interior Life

There is a growing illusion in contemporary discourse that what we are witnessing is merely a technological transition. That illusion must be rejected at the outset. What is unfolding is not simply the rise of artificial intelligence, nor the expansion of digital infrastructures, nor even the acceleration of global connectivity. It is, more fundamentally, the reconfiguration of the conditions under which human beings experience reality, produce meaning, and recognize themselves as subjects.

The planetary age marks the moment when the world ceases to function as an external horizon and begins to operate as an internal system. This distinction is not rhetorical. In earlier epochs, the world was something that human beings encountered—through travel, trade, conquest, or intellectual curiosity. Today, the world precedes encounter. It is already structured, already filtered, already mediated before it appears to consciousness. The human being no longer approaches reality; reality arrives pre-processed.

It is precisely within this transformation that cultural engineering emerges—not as a metaphor, but as a structural condition. Culture is no longer primarily inherited, negotiated, or contested within human communities. It is increasingly produced through systems that anticipate behavior, curate perception, and normalize specific patterns of thought. The question, therefore, is no longer how culture evolves, but how it is designed.

This shift cannot be understood without situating it within the intellectual horizon opened by Masa Depan Dunia: Manusia dalam Peradaban Planetari (The Future of the World: Humanity in Planetary Civilization). The planetary condition, as articulated in that work, is not simply about scale but about integration: the fusion of technological systems with the epistemic and existential structures of human life. The planetary is the point at which humanity becomes inseparable from the infrastructures it creates. It is no longer possible to distinguish clearly between the human and the system, because the system becomes the condition of human experience.

Yet, if the planetary condition defines the space in which we now exist, it is the works of Michio Kaku that illuminate the trajectory toward which this condition is moving. In Physics of the Future, The Future of Humanity, and The Future of the Mind, Kaku presents a vision of technological advancement that extends far beyond mere innovation. He sketches a future in which consciousness itself becomes accessible to technological manipulation—where the mind is no longer an interior sanctuary but an interface.

At first glance, this appears as the ultimate triumph of human ingenuity. The capacity to map, enhance, and potentially transfer consciousness promises liberation from biological limitations. But this promise conceals a deeper transformation. Once the mind becomes accessible, it also becomes governable. What was once interior becomes exteriorized. What was once private becomes measurable. And what is measurable can, in principle, be controlled.

Here, the optimism of technological futurism intersects with the anxiety of philosophical anthropology. The question is no longer whether we can extend human capabilities, but whether the extension of those capabilities will dissolve the very conditions that make human experience meaningful. If thought becomes programmable, then what becomes of freedom? If memory becomes editable, what becomes of identity? If emotion becomes predictable, what becomes of moral responsibility?

The Age of AI, by Henry Kissinger, Eric Schmidt, and Daniel Huttenlocher, confronts this question directly. It argues that artificial intelligence introduces a new epistemic actor into human history, one that does not merely assist human reasoning but begins to participate in it. This marks a rupture in the history of knowledge. For the first time, human beings are no longer the sole producers of meaning.

This rupture is not simply technical; it is ontological. Knowledge, which has traditionally been grounded in human interpretation, becomes partially detached from the human. AI systems generate insights that are not fully transparent to their creators. They produce outputs without sharing the reasoning processes that led to them. In doing so, they introduce a form of opacity into the very structure of knowledge.

Opacity, however, is not neutral. It redistributes power. When knowledge is produced by systems that cannot be fully interrogated, authority shifts from those who interpret to those who control the systems. Cultural engineering operates precisely within this shift. It does not require the elimination of human agency; it requires its reconfiguration.

The implications of this transformation become even more evident when examined through the lens of AI 2041 by Kai-Fu Lee and Chen Qiufan. In their speculative scenarios, artificial intelligence does not impose itself violently upon society. Instead, it integrates seamlessly into everyday life, anticipating needs, shaping preferences, and constructing personalized realities.

This personalization is often celebrated as empowerment. Yet it carries within it a paradox. The more reality is tailored to individual preference, the less it is shared. Culture, which depends upon shared meaning, begins to fragment. Each individual inhabits a slightly different world, curated by algorithms that prioritize engagement over coherence. The result is not diversity in the classical sense, but epistemic isolation.

Isolation, in turn, amplifies the dynamics described in Jonathan Haidt’s The Righteous Mind. Haidt argues that human moral judgment is driven primarily by intuition rather than reason. In environments that privilege speed and emotional response, this intuitive system becomes dominant. The digital ecosystem is precisely such an environment.

Religious behavior, when mediated through this system, undergoes a profound transformation. It becomes reactive rather than reflective. It becomes performative rather than contemplative. The sacred is no longer approached through disciplined interpretation but through instantaneous judgment. A verse, a symbol, or a statement can trigger outrage within seconds, bypassing the slow processes of understanding that traditionally define religious life.

This acceleration is not accidental. It is engineered. Platforms are designed to maximize engagement, and engagement is driven by emotional intensity. The system rewards what provokes a reaction. Over time, this reward structure reshapes behavior. It normalizes aggression, amplifies polarization, and erodes the capacity for nuance.

What emerges, then, is not simply a crisis of information, but a crisis of interiority. The human capacity for reflection—the ability to pause, to interpret, to hold complexity—begins to weaken. The interior life, which has historically been the foundation of both philosophical inquiry and religious devotion, becomes increasingly fragile.

Cultural engineering, in its deepest sense, is the restructuring of this interior space. It is the process by which systems shape not only what we think, but how we think, and ultimately, who we become.

The question that remains is whether this process is reversible. Can human beings reclaim the capacity for reflection within an environment that systematically undermines it? Can religious traditions adapt without surrendering their depth? Can knowledge remain meaningful when its production is partially automated?

These questions cannot be answered solely through technological solutions. They require a reexamination of the human condition itself. They require a recognition that the planetary age is not merely a new phase of history, but a new configuration of existence.

And within this configuration, the most urgent task is not to resist technology, but to resist the quiet erosion of the human interior—the space in which meaning is not engineered, but discovered.

The Planetary Turn: From World to System

The contemporary condition of humanity cannot be adequately described in the familiar language of globalization. What is unfolding is not merely the expansion of interconnectedness, but the emergence of a planetary system—an integrated, self-reinforcing architecture in which technology, knowledge, power, and meaning are co-produced in real time. The world is no longer simply the stage upon which human life unfolds; it has become an active system that shapes, filters, and structures human experience.

This shift finds its conceptual foundation in Masa Depan Dunia: Manusia dalam Peradaban Planetari, which frames the planetary not as a geographical expansion but as a transformation of human existence itself. The planetary condition dissolves the boundaries between local and global, between subject and system, between knowledge and infrastructure. In this environment, human beings do not merely inhabit the world—they are embedded within systems that pre-structure perception, cognition, and social interaction.

The Age of AI advances this argument by suggesting that artificial intelligence is not merely a technological tool but a new epistemic actor. AI does not just process information; it participates in the production of meaning. It changes how knowledge is generated, validated, and distributed. This marks a civilizational rupture: knowledge is no longer exclusively human.

The planetary age, therefore, represents a convergence of two processes: the systemic integration of the world and the partial automation of cognition. Cultural engineering emerges precisely at this intersection.

The Future Imagined: Science, Technology, and the Expansion of Human Possibility

The future-oriented works of Michio Kaku—particularly Physics of the Future, The Future of Humanity, and The Future of the Mind—provide a crucial theoretical layer for understanding the technological horizon of the planetary age.

Kaku’s work maps the trajectory of scientific advancement toward a future in which the boundaries of human existence are radically expanded: brain-computer interfaces, artificial intelligence, space colonization, and the manipulation of consciousness itself. These developments are often framed as progress—an extension of human capability beyond biological limits.

Yet, when placed within the planetary framework, these possibilities reveal a deeper tension. The expansion of capability is simultaneously an expansion of control. Technologies that enhance cognition also make cognition observable, measurable, and ultimately governable. The brain becomes not only a site of thought but a site of data.

This is where the logic of cultural engineering intensifies. If the mind can be mapped, predicted, and influenced, then culture itself—understood as shared patterns of thought and meaning—becomes a programmable domain. The future of humanity, in this sense, is not merely about survival or expansion into space; it is about the redefinition of what it means to think, to feel, and to believe.

Kaku’s vision, when read critically, suggests that the future is not only technological but anthropological. It is about the transformation of the human condition itself.

AI and the Simulation of Reality: The Logic of Predictive Systems

AI 2041 offers a speculative yet grounded exploration of how artificial intelligence will reshape everyday life. Through a combination of fiction and analysis, it presents a future in which AI systems anticipate human behavior, personalize reality, and construct environments tailored to individual preferences.

What emerges from this vision is not merely convenience but simulation. Reality itself becomes mediated through predictive systems. Individuals do not encounter the world directly; they encounter curated versions of it—filtered, ranked, and optimized.

This has profound implications for culture. Culture, traditionally understood as a shared system of meaning, becomes fragmented into personalized streams. Each individual inhabits a slightly different reality, shaped by algorithmic inference. The common ground necessary for collective understanding begins to erode.

At this point, cultural engineering becomes invisible. It is no longer imposed from above; it is embedded within the architecture of experience. The system does not tell people what to think. It shapes what they are likely to encounter, and therefore what they are likely to think.

The Moral Psychology of Division: Emotion, Intuition, and Religious Behavior

The transformation of religious behavior in the planetary age cannot be understood without engaging the moral psychology Jonathan Haidt articulates in The Righteous Mind.

Haidt’s central thesis, that moral judgment arises primarily from intuition and emotion, with reasoning following largely as after-the-fact justification, provides a critical lens for interpreting contemporary religious dynamics. In digital environments, this tendency is amplified. Platforms are designed to trigger emotional responses: outrage, fear, pride, and a sense of belonging. These responses are fast, automatic, and highly shareable.

This aligns with the distinction between fast and slow cognition, popularized by Daniel Kahneman as System 1 and System 2 thinking. In the digital environments of the planetary age, System 1 tends to dominate. Religious content is consumed rapidly, reacted to instantly, and disseminated widely. Reflection becomes secondary.

The consequence is a transformation of religion itself. Religion becomes less a domain of contemplation and more a domain of reaction. It becomes a marker of identity, a trigger for emotional alignment, and, in many cases, a tool for polarization.

Haidt’s framework helps explain why religious conflicts in the digital age often escalate quickly and resist resolution. The issue is not merely theological disagreement but the cognitive structure of moral judgment itself. When moral judgment is driven by intuition, argument alone is insufficient to resolve conflict.

The Automation of Knowledge: From Interpretation to Algorithm

One of the defining features of the planetary age is the automation of knowledge production. Knowledge is no longer solely generated through human interpretation; it is increasingly produced, filtered, and distributed by algorithms.

The Age of AI articulates this shift with clarity: artificial intelligence systems can generate insights, make decisions, and even produce content that mimics human reasoning. This represents a fundamental transformation of epistemology.

In traditional systems, knowledge was mediated through institutions—universities, religious authorities, and scholarly traditions. These institutions provided not only information but also context, interpretation, and ethical boundaries. In the automated environment, these mediations are weakened.

The algorithms that rank and recommend content on digital platforms are typically optimized for engagement. They amplify what attracts attention, not necessarily what is true or meaningful. As a result, knowledge becomes fragmented, accelerated, and emotionally charged.

When applied to religion, this transformation becomes even more significant. Religious knowledge, once rooted in disciplined interpretation, becomes content—modular, shareable, and often decontextualized. The sacred is reduced to fragments that circulate within the attention economy.

This is not merely a technological shift; it is a transformation of authority. Authority moves from visible institutions to invisible systems. It becomes harder to identify, harder to challenge, and harder to regulate.

Cultural Engineering as Behavioral Architecture

Cultural engineering in the planetary age operates through behavioral architecture. It is not primarily about ideology but about design. Platforms are structured to maximize engagement, and engagement is driven by emotion.

This creates a feedback loop: emotional content generates engagement; engagement increases visibility; increased visibility reinforces emotional patterns. Over time, these patterns become normalized.

Culture, in this sense, is no longer simply transmitted; it is engineered through repetition and reinforcement. Individuals internalize patterns of reaction, perception, and judgment that are shaped by the environment.

The most significant aspect of this process is its subtlety. It does not require coercion. It operates through incentives. It makes certain behaviors feel natural, even inevitable.

This is why cultural engineering is difficult to resist. It is embedded in everyday life. It shapes habits, not just beliefs.

Toward a New Social Structure: Power, Authority, and the Politics of Reality

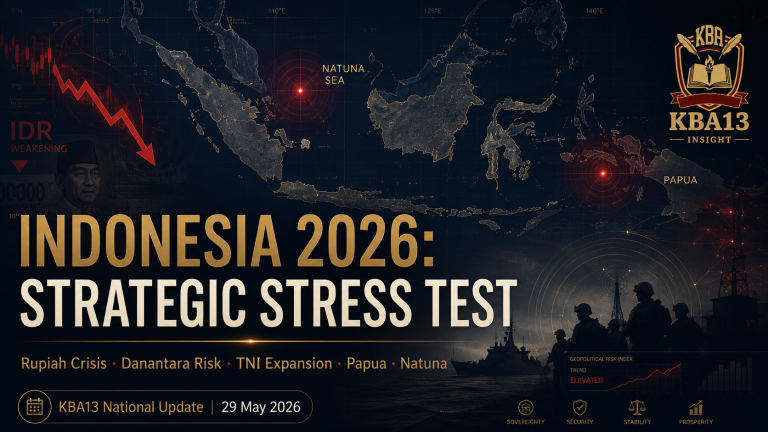

The transformations described above converge in a reconfiguration of social structure. Authority becomes hybrid—combining economic power, technological control, symbolic influence, and emotional mobilization.

In such a system, reality itself becomes contested. What people believe is shaped by what they see, and what they see is shaped by systems. Control over visibility becomes a form of power.

This leads to multiple possible configurations of society: a system dominated by a few powerful actors; a system characterized by widespread participation but uniform thinking; a system driven by charismatic figures; or a system governed by invisible infrastructures.

In each case, the central issue is not simply who holds power, but how power operates. In the planetary age, power is exercised through the management of perception.

Conclusion: The Question of Human Agency

The planetary age raises a fundamental question: are human beings still agents, capable of shaping their own cultural and spiritual realities, or are they becoming objects of systems designed to predict and influence behavior?

The works discussed—Masa Depan Dunia, The Age of AI, the futures mapped by Michio Kaku, the speculative realism of AI 2041, and the moral psychology of The Righteous Mind—collectively point toward a single conclusion: the human condition is being redefined.

This redefinition is not inevitable, but it is powerful. It demands a response—not only technological or political, but philosophical and spiritual.

The challenge of the planetary age is not simply to adapt to new systems, but to understand them, to question them, and, where necessary, to resist them.

Because the future of culture is no longer something that will emerge on its own.

It will be engineered.